It’s important to take a defensive stance and practice question inversion when using an LLM to build code. LLMs don’t (yet) possess the higher reasoning and broad multi-mode testing ability required to make the big leaps that allow for edge case exploitation. They often lie about what they’ve done (not intentionally, but the post-hoc explanations they generate for their actions are not always accurate). And unless guided very specifically, they turn out code that is on par with a junior engineer’s. Questioning the work they perform, especially in a new instance where the previous session context isn’t immediately available, is an important step in producing good production code.

I’d been building a Web3 development skill over several sessions—a reference document covering smart contract security, debugging patterns, and cross-chain architecture. Three sessions in, the skill was stable and working. But “working” isn’t the same as “complete.”

Security in software development is one of those domains where you don’t know what you don’t know. I could have continued adding features and hoped I’d covered the important parts. Instead, I asked Claude to audit its own work.

The Audit Prompt

After rebuilding context from the skill’s project files, I posed a simple question:

Reviewing the aspects of the skill that we've created, and known vulnerability types, are there any blind spots that you can identify that should fundamentally be a part of the web3 skill?

This prompt does something specific: it gives Claude a lens to evaluate against. Not “is this good?” but “given what we know about security vulnerabilities, what’s missing?” The domain knowledge (known vulnerability types) combined with the specific artifact (the skill we created) produces a focused analysis rather than generic suggestions.

The retroactive audit prompt pattern: “Reviewing [what we’ve created], and [known domain considerations], are there any blind spots?” This works for any multi-session project—security audits, feature completeness, documentation coverage.
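As a minimal sketch, the template can be filled in programmatically. The function name `build_audit_prompt` and its parameters are hypothetical, chosen only for illustration; the wording follows the prompt used in this session.

```python
def build_audit_prompt(artifact: str, domain_lens: str, scope: str = "") -> str:
    """Fill the retroactive audit template with an artifact and a domain lens."""
    prompt = (
        f"Reviewing {artifact}, and {domain_lens}, "
        "are there any blind spots that you can identify"
    )
    # The optional scope narrows the question to what belongs in the artifact.
    if scope:
        prompt += f" that should fundamentally be a part of {scope}"
    return prompt + "?"


if __name__ == "__main__":
    print(build_audit_prompt(
        artifact="the aspects of the skill that we've created",
        domain_lens="known vulnerability types",
        scope="the web3 skill",
    ))
```

Called with the arguments above, it reproduces the audit prompt from this session word for word. Swapping the artifact and the lens adapts it to other projects.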

Claude didn’t just answer from memory. It viewed the existing files—the historical hacks index, the SKILL.md, the three-S framework document—to verify what was actually covered before claiming anything was missing. This verification-first behavior meant the gap analysis was grounded in reality rather than assumptions.

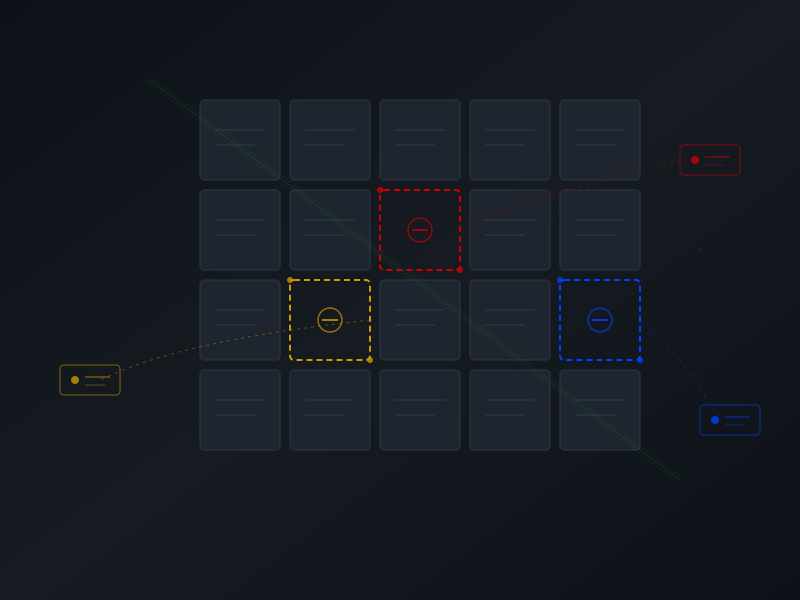

The result: six identified blind spots, each with real exploit examples. Frontend/dApp security (critical), supply chain attacks (critical), MEV considerations (high), operational security (high), infrastructure concerns (medium), economic mechanism design (medium). Concrete gaps with concrete severity ratings.
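One way to keep findings like these actionable is to record them as data and order them by severity. This is an illustrative sketch only: the `BlindSpot` type and the `SEVERITY_RANK` mapping are hypothetical names, not something the session produced.

```python
from dataclasses import dataclass

# Lower rank sorts first; the three labels are the ones used in the audit.
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2}


@dataclass(frozen=True)
class BlindSpot:
    area: str
    severity: str


FINDINGS = [
    BlindSpot("frontend/dApp security", "critical"),
    BlindSpot("supply chain attacks", "critical"),
    BlindSpot("MEV considerations", "high"),
    BlindSpot("operational security", "high"),
    BlindSpot("infrastructure concerns", "medium"),
    BlindSpot("economic mechanism design", "medium"),
]


def by_severity(findings):
    """Return findings ordered critical -> high -> medium."""
    # sorted() is stable, so findings of equal severity keep their reported order.
    return sorted(findings, key=lambda f: SEVERITY_RANK[f.severity])


if __name__ == "__main__":
    for finding in by_severity(FINDINGS):
        print(f"{finding.severity:>8}  {finding.area}")
```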

Before Committing to the Fix

My instinct was to start adding content immediately. But I had a question first:

I also know that the more robust a skill is, the more "weight" it adds to the context window in file size. At what point in the process of invoking a skill is that file weight loaded into the context window, and how can I evaluate an effective tradeoff in total skill file size versus concerns over breaking the session by invoking a skill?

This is the kind of question that’s easy to skip when you’re eager to make progress. But understanding the mechanics before committing to a solution prevents wasted work.

Asking “before we do that…” questions correlates with cleaner sessions. The few minutes spent understanding constraints often save iterations later.

Claude’s answer was illuminating—and came with verification. Rather than stating assumptions about how skills load, Claude examined the directory structure and tested write permissions to confirm the mechanics:

Only skill metadata (~500 tokens) is always loaded. The SKILL.md file and any references load on-demand when Claude views them. Progressive loading means file count matters less than you’d think—separate files preserve the loading efficiency.

Skill loading is progressive: metadata always present, content loaded on-demand. This means splitting content into separate reference files is better than building a monolithic SKILL.md—you preserve the ability to load only what’s needed for a given session.
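The tradeoff can be made concrete with a back-of-the-envelope estimate. The sketch below rests on loudly labeled assumptions: the 4-bytes-per-token ratio is a rough heuristic for English text rather than a real tokenizer, the file names and sizes are hypothetical, and the only figure taken from the session is the ~500-token metadata cost.

```python
# Assumption: ~4 bytes per token is a rough heuristic, not an exact tokenizer count.
BYTES_PER_TOKEN = 4
# The "~500 tokens" of always-loaded metadata quoted in Claude's answer.
METADATA_TOKENS = 500


def estimate_tokens(size_bytes: int) -> int:
    """Very rough token estimate for a file of the given size."""
    return size_bytes // BYTES_PER_TOKEN


def session_cost(file_sizes: dict, viewed: set) -> int:
    """Metadata always counts; a file counts only if it was viewed this session."""
    on_demand = sum(
        estimate_tokens(size)
        for name, size in file_sizes.items()
        if name in viewed
    )
    return METADATA_TOKENS + on_demand


if __name__ == "__main__":
    # Hypothetical reference files and sizes in bytes.
    sizes = {"topic-a.md": 12_000, "topic-b.md": 16_000}
    print(session_cost(sizes, viewed=set()))            # 500
    print(session_cost(sizes, viewed={"topic-a.md"}))   # 3500
    print(session_cost(sizes, viewed=set(sizes)))       # 7500
```

Under these assumptions, a session that touches one reference file pays for that file alone. A single monolithic file would cost the full amount every time it was viewed.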

One More Question

I still wasn’t ready to proceed. I’d created skills before, but I’d never updated an existing one:

Before we do that, what is the process of updating a skill? Typically in Claude Desktop, you give me the option to "Copy to Skills" when I complete skill creation, but I have not updated an existing skill yet.

Again, Claude verified rather than assumed, testing write permissions to the skill directory directly. The answer: direct modification works. Changes via str_replace and create_file are immediately active. No “Copy to Skills” step needed for updates.
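The session doesn’t record exactly how Claude probed the directory. One common way to test write access, instead of inferring it from permission bits, is to try creating a scratch file. The helper name `can_write` is hypothetical, and this is a sketch of the general technique, not a reconstruction of what Claude ran.

```python
import tempfile
from pathlib import Path


def can_write(directory: Path) -> bool:
    """Return True if a scratch file can be created (and removed) in `directory`."""
    try:
        # The temporary file is deleted automatically when the block exits.
        with tempfile.NamedTemporaryFile(dir=directory, prefix=".write-probe-"):
            pass
        return True
    except OSError:
        # Covers a missing directory, denied permissions, read-only mounts, etc.
        return False


if __name__ == "__main__":
    # Demonstrated on a throwaway directory; substitute the real skill path.
    with tempfile.TemporaryDirectory() as scratch:
        print(can_write(Path(scratch)))
        print(can_write(Path(scratch) / "does-not-exist"))
```

Attempting the write answers the question that matters here (will an edit succeed?) and leaves nothing behind.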

Two clarifying questions. Maybe ninety seconds of conversation. But now I understood the constraints (progressive loading favors separate files) and the mechanism (direct edits work). The implementation could proceed without surprises.

The Implementation

With mechanics understood, I approved the priority additions:

Yes, let's proceed with those priority files.

Claude created three reference files directly in the skill directory: frontend-security.md (14K), supply-chain-security.md (14K), and mev-protection.md (17K). The SKILL.md was updated with new triggers and scenarios pointing to these references.

Eight exchanges total. Three new reference files. Zero corrections needed.

Test capabilities rather than assuming them. Claude’s verification-first approach—viewing files before claiming coverage, testing permissions before claiming write access—is a pattern worth adopting in your own prompting. Ask Claude to verify before acting.

The Pattern

The retroactive audit isn’t limited to security reviews or skill development. Any multi-session project accumulates assumptions and blind spots. The pattern works anywhere:

“Reviewing our API design and REST best practices, are there any gaps?”

“Given common UX anti-patterns, what might we have missed in this interface?”

“Looking at our test coverage and typical failure modes, where are we exposed?”

The key elements: reference what exists (the artifact), provide a domain lens (known considerations), and ask specifically for gaps (not general feedback). Claude can evaluate its own prior output against external criteria—but only if you frame the question to enable that evaluation.

This session needed no corrections because the right questions were asked before any action was taken. The audit prompt surfaced real gaps. The mechanics questions prevented architectural mistakes. The permissions check confirmed the update path.

Sometimes the most productive thing you can do is pause and ask what you might be missing.